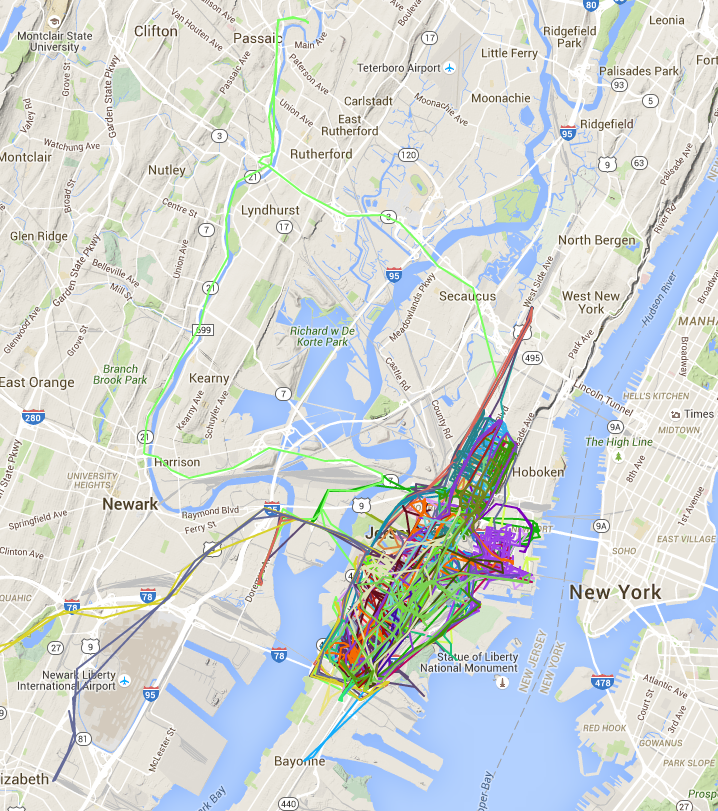

A couple of months ago I took a stab at plotting the Jersey City parking zones after getting frustrated that the only place to see them was a PDF of streets and addresses. Last week someone left an awesome comment pointing out that Jersey City has a bunch of open data available, including a near-real-time feed of garbage truck locations, a general open data portal, and the ability to request custom data. As a first project I decided to capture the location of each garbage truck every minute and then plot the results on a map. The results are interesting: some trucks stay local to Jersey City while others venture as far as Newark Airport. The final visualized routes are at https://dangoldin.github.io/jersey-city-open-data/ and the code is up on GitHub.

The approach I took was straightforward. After going to the real-time map I opened the browser's network explorer to see the HTTP requests being made to update the map with the latest truck locations. It turned out to be a single URL that returned a pipe-delimited file containing the location of each truck. By writing a simple wget script and setting it up as a cron job I was able to capture the truck locations every minute. After a day's worth of data I combined the files and removed duplicate lines (from when a truck stayed in a single location). After that it was simple to use the Google Maps API to draw a route for each individual truck. The neat thing here is that 90% of the work was done through simple shell commands: one command to fetch the data every minute, another to combine the files into a single file, and a few others to sort and dedupe the data. By the time I got to coding, all I needed to do was convert the data from a pipe-delimited file into something that could be consumed by the Google Maps API.
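To make that last conversion step concrete, here is a minimal Python sketch of how the combined file could be turned into per-truck routes. It is not the code from the repo, and the column order (`truck_id|lat|lng|timestamp`) is an assumption made for illustration, since the feed's actual layout isn't shown here. The function names are hypothetical too. The output uses `{lat, lng}` objects because that is the literal form the Google Maps JavaScript API accepts for a polyline path.

```python
import json
from collections import defaultdict

# Assumed column order for illustration; the real feed's layout may differ.
FIELDS = ("truck_id", "lat", "lng", "timestamp")


def parse_line(line):
    """Split one pipe-delimited record into a dict, or return None if malformed."""
    parts = [p.strip() for p in line.strip().split("|")]
    if len(parts) != len(FIELDS):
        return None
    record = dict(zip(FIELDS, parts))
    try:
        record["lat"] = float(record["lat"])
        record["lng"] = float(record["lng"])
    except ValueError:
        return None
    return record


def build_routes(lines):
    """Group records by truck, order them by time, and skip repeated positions."""
    by_truck = defaultdict(list)
    for line in lines:
        record = parse_line(line)
        if record is not None:
            by_truck[record["truck_id"]].append(record)

    routes = {}
    for truck_id, records in by_truck.items():
        # Assumes timestamps sort correctly as strings (e.g. ISO 8601).
        records.sort(key=lambda r: r["timestamp"])
        path = []
        for r in records:
            point = {"lat": r["lat"], "lng": r["lng"]}
            # Drop consecutive duplicates from when a truck sat still.
            if not path or path[-1] != point:
                path.append(point)
        routes[truck_id] = path
    return routes


def routes_to_json(routes):
    """Serialize the routes so a page drawing the map can load them."""
    return json.dumps(routes, sort_keys=True)
```

Calling `build_routes(open("combined.txt"))` on the combined file (the filename is a placeholder) would give one list of points per truck, which can then be dumped with `routes_to_json` and handed to the map page. Deduplicating here as well as in the shell is redundant but harmless, and it catches repeats that differ only in their timestamp.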